tl;dr: We've put together this post to share resources and tips that might be useful for people writing criticism or red teaming existing work.

We’re releasing this as a companion document to the announcement of the Criticism and Red Teaming Contest, but we hope this resource will be useful beyond that specific occasion.[1]

We’re also hoping to grow and improve this resource after posting it, so please feel free to suggest additions and changes.

Why criticisms?

In brief, we think:

- The people involved in the project of “effective altruism” are probably getting many things wrong. Noticing those errors (and course-correcting) matters for the project of actually doing the most good we can.

- There are reasons to expect good criticism to be under-supplied relative to its value.

- Many people feel tense and stressed about writing something critical, and we hope this resource will help them.

You can see more of our rationale here.

Existing resources

Note that some of the links here appear again below. If you know of other resources, please let us know!

Guides and collections

- Relevant topics on the Forum

  - Red teaming

  - And other useful topic pages: Epistemic deference, Criticism of effective altruism, Criticism of longtermism and existential risk studies

  - And on LessWrong: Epistemic Review, Epistemic Spot Check, Disagreement

- Red Teaming Handbook, 3rd Edition

  - A particularly useful excerpt starts on page 71: “Argument Mapping”

  - Outline

    - Chapters 1-2, 7: Introduction

    - Chapters 3-6: Mindset

    - Chapters 8-9: Tools

  - Note: we’re happy to pay a $200 bounty for notes on the Red Teaming Handbook that we’d like to add to this post.

- Minimal-trust Investigations (Holden Karnofsky)

- Scout Mindset (Julia Galef — you can get a free copy here, find a review here, and see a short clip discussing the core points here)

- Slides from a presentation that Lizka, Charlotte Siegmann, Joshua Monrad, and Fin Moorhouse gave at EA Global: London 2022.

Assorted other writing

Some writing on criticism that we appreciate.

- Arguing without warning (Dynomight)

- I think it's great to have your work criticized by strangers online (Andrew Gelman)

- Negativity (when applied with rigor) requires more care than positivity (Andrew Gelman)

- Six Ways To Get Along With People Who Are Totally Wrong* (Rob Wiblin)

- Challenges of Transparency (Open Philanthropy), from Notable Lessons

- Butterfly Ideas (Elizabeth van Nostrand)

- Epistemic Legibility (Elizabeth van Nostrand)

- The motivated reasoning critique of effective altruism (Linch Zhang)

- Noticing the skulls, longtermism edition (David Manheim)

- Liars (Kelsey Piper)

Models and types of criticisms

Some useful resources:

- Four categories of effective altruism critiques (Joshua Monrad)

- Minimal-trust Investigations (Holden Karnofsky)

- Epistemic spot checks (Elizabeth van Nostrand)

Minimal trust investigations

A minimal trust investigation (MTI) involves suspending your trust in others' judgments and trying to understand the case for and against some claim yourself, ideally to the point where you can keep up with experts and form an independent judgment.

In his post about MTIs, Holden Karnofsky explains that they are “not the same as taking a class, or even reading and thinking about both sides of a debate”. They are more thorough and skeptical, interrogating claims as far down as possible.

MTIs are useful when you identify some claim in EA that seems crucial to you, but hasn’t been scrutinized enough, and you’re genuinely unsure about its truth. When you embark on an MTI, you shouldn’t feel confident about the outcome: that’s the point!

For the contest: If you conduct something like an MTI focused on a claim you’re unsure about, and you end up agreeing with the claim, that still counts as critical work in our book. Agreeing with the original conclusion will not count against you. We do not want to stack the deck in favour of finding the contrarian outcome here; what matters is getting a bit closer to the true outcome. Taking a critical stance towards a claim is one way of doing that.

Note that MTIs are typically extremely hard work!

Red teaming

‘Red teaming’ is the practice of “subjecting [...] plans, programmes, ideas and assumptions to rigorous analysis and challenge”. In other words, a red team sets out to describe the strongest reasonable case against something, and to identify its weakest components. Red teams are used in government agencies (especially defense and intelligence) and by commercial companies. What makes red teaming distinctive is that you don’t need to think the object of the red team is misguided to think that red teaming can be useful. As well as helping others form an overall assessment of some practice or claim, red teams can help spot potential failure modes and ways to avoid them.

Red teams can be arbitrarily technical and narrow: you’re trying to give useful information to the relevant actors, not necessarily to a general audience. Red teaming might also be an especially good way to skill up as an early-career researcher, since the skills required to effectively scrutinize an existing idea or project are very similar to the skills required to generate new ones.[2]

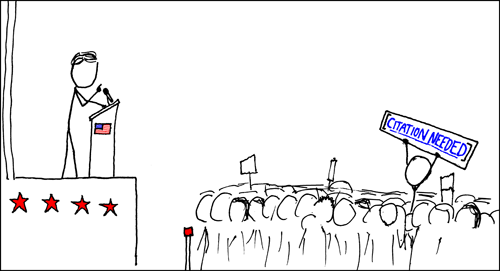

Fact checking and chasing citation trails

Some key claims in EA snowball: they’re suggested tentatively or for the sake of example, but soon become canonical, and inform other research. If you notice claims that seem crucial, but whose origin is unclear, it can pay to track down the source and evaluate its legitimacy.

You might discover that the claim is well supported, or that it actually rests on shakier or more complicated evidence than its proponents suggest. In rare cases you might find that the claim is in fact unsubstantiated. All of these are useful to know!

One example is Saulius’ effort to fact-check comparisons of the cost-effectiveness of trachoma surgeries and guide dogs.

Adversarial collaboration

In an adversarial collaboration, two domain experts with opposing views work together to clarify their disagreements. This is not the same as a debate: the goal is not to persuade the other side. A successful adversarial collaboration might instead produce a description of a hypothetical experiment that could resolve the disagreement, an empirical forecast on which the parties would take opposite sides, or cases that most sharply draw out the disagreement.

You could record a conversation and write up an edited transcript, or work together on a post. A neutral moderator might help facilitate the process.

Phil Tetlock writes[3] that adversarial collaborations are “least feasible when most needed” (and vice versa), and that the highest expected returns lie in the “murky middle”, in which theory-testing conditions are difficult but not yet hopeless.

Clarifying confusions

Sometimes, you might just be confused about something — why do most people in EA seem to agree that X is more important than Y? Why are they ignoring Z? This might not amount to a fully-fledged criticism (you’re not necessarily arguing Y should be prioritized over X) because you’re not sufficiently confident. You might also be confused in more basic ways: how is this argument for X supposed to work? What’s up with Y in the first place? Why does nobody seem to be talking about Z?

Even if you can’t resolve the confusion, you could try to pin down what is unclear to you and consider what might resolve it.

Points of confusion probably go under-reported: it can be embarrassing to announce that you're simply confused about some assumption that everyone else seems to regard as obvious. We expect there's some amount of pluralistic ignorance at play here (many people may privately share a confusion while each assumes everyone else understands), and the less of this the better.

Evaluations of organizations

Some of the most directly useful criticism posted on the Forum has evaluated specific organizations, including their (implicit) theory of change, key claims, and track record, and suggested concrete changes where relevant. Here’s an incomplete list of examples:

- Why I'm concerned about Giving Green (Alex Lawsen)

- Two tentative concerns about OpenPhil's Macroeconomic Stabilization Policy work (Remmelt Ellen)

- Shallow evaluations of longtermist organizations (Nuño Sempere)

- Why we have over-rated Cool Earth (Sanjay Joshi)

- A Red-Team Against the Impact of Small Donations (Applied Divinity Studies)

- External Evaluation of the EA Wiki (Nuño Sempere)

- The EA Community and Long-Term Future Funds Lack Transparency and Accountability (Evan Gaensbauer)

Sometimes we make mistakes that aren’t obvious from the inside but can be pointed out by outside observers. This could be especially true for longtermist projects, which may have fewer obvious feedback loops.

We’d encourage a norm of reaching out to the project or org before publishing critical evaluations. For instance, it may be the case that the org in question has private information that answers some of your critical points. We’d also encourage a norm of organizations welcoming critical evaluations!

(In fact, it would be great to see representatives of EA organizations invite scrutiny of their own projects – you could do so in the comments here or with your own Forum post. You might also consider listing key questions that you’d most like to see examined.

At the same time, let’s recognize that it’s often very easy to find holes in early-stage orgs, and we don’t want scrutiny to shade into the kind of hostility that could make promising organizations less likely to succeed. Where you end up being impressed, say as much.)

Steelmanning, summarizing, and ‘translating’ existing criticism for an EA audience

You might also have encountered an idea from ‘outside’ the EA community that you think contains a useful insight. We’d love to see work succinctly explaining these existing ideas, and constructing the strongest versions (‘steelmanning’ them). You might consider doing this in collaboration with a domain expert who does not consider themselves part of the EA community.

We’d also love to see summaries and distillations of all kinds of longer work (such as books and reports) with critical perspectives on some aspect of effective altruism, which draw out key lessons and perhaps also describe your own reaction.

This kind of work qualifies for the Criticism and Red Teaming Contest.

Some good qualities of criticism (and how to have them)

1. Good choice of topic

Targeting real topics

If I constructed a beautiful, solid, and clear argument for why the Centre for Effective Altruism should not fund amusement parks, I would not be doing useful work.

Why? I’d have criticized something that’s not real: the Centre for Effective Altruism doesn’t fund amusement parks and probably never will.

This is an extreme example, but analogous things happen. One common form is criticizing a “strawman”: a weaker misrepresentation of the target.

How to combat this? Here are some suggestions:

- Make a concerted effort to understand and “steelman” the position you’re arguing against — that is, respond to the most reasonable version of the position that you can imagine.

- Talk to someone who holds the view you’re criticizing, and really listen.

- Send your criticism to the organization you’re criticizing and see whether they dispute any of your key points.

- Check that you haven’t simply misrepresented or missed important points, especially ones that may have required a certain amount of context.

Targeting something important or action-relevant

Nit-picking isn’t as useful as identifying serious weaknesses or showing where an important crux breaks down. Here’s a relevant page, with some useful materials.

Some specific things you might want to criticize

Here is a list of topics and works that we think clearly pass the above criteria (compiled by Training for Good). You could also look at some compiled lists of the most important and influential readings for effective altruism, like this list, the results of the Decade Review, resources and books listed on effectivealtruism.org, and the EA Handbook. This is obviously not a comprehensive list, but it’s a start. There are also some crowd-sourced or user-produced lists of topics you could explore, like the answers to this question.

For the contest: if you’re unsure about whether something is a good topic to red team or criticize, please feel free to reach out to us.

2. Reasoning transparency and epistemic legibility

- Reasoning Transparency (Open Philanthropy)

- Epistemic Legibility (Elizabeth van Nostrand)

This is also related to scout mindset, discussed in the “Guides and collections” section.

For the contest: we view this as one of the most important criteria for submissions.

3. Constructive

The work is decision-relevant (directly or indirectly). Bonus points for concrete solutions.

It is often much more difficult to suggest improvements that actually work than it is to point out flaws in current implementations. Making an effort to suggest concrete changes helps correct for this bias, which might otherwise skew critical work towards an unproductive kind of cynicism.

4. Aware of prior work

Take some time to check that you’re not missing an existing response to your argument. If responses do exist, mention (or engage with) them. (Here’s a relevant post discussing failure modes around this issue, by Scott Alexander.)

5. All the usual good discourse norms (civility, etc.)

You can see the Forum’s discourse norms here: What we’re aiming for - EA Forum. Criticisms aren’t exempt from discourse norms.

6. Other potentially important qualities

- Novelty. The piece presents new arguments, or otherwise presents familiar ideas in a new way. Note that it’s often still valuable to distill or “translate” existing criticisms to support better communication of the ideas.

- Focused. Critical work is often (but not always) most useful when it is focused on a small number of arguments, and a small number of objects. We’d love to see (and we’re likely to reward) work that engages with specific texts, strategic choices, or claims.

- Not “punching down.” For instance, if you’re very “established” in the community, you probably shouldn’t target someone’s first EA Forum post.

Please let us know if you think something should be on this list that isn’t!

Special thanks to Cillian Crosson for helpful feedback and resources! We're also grateful to everyone whose resources we're sharing.

[1] We think collections and resources can often be useful.

[2] We recommend Linch’s shortform post on ‘red teaming papers as an EA training exercise’, and Cillian Crosson’s post explaining the ‘Red Team Challenge’.

[3]
