(Cross-posted from The Sideways View.)

For most people I don't think it's important to have a really precise definition of integrity. But if you really want to go all-in on consequentialism then I think it's useful. Otherwise you risk being stuck with a flavor of consequentialism that is either short-sighted or terminally timid.

I.

I aspire to make decisions in a pretty simple way. I think about the consequences of each possible action and decide how much I like them; then I select the action whose consequences I like best.

To make decisions with integrity, I make one change: when I imagine picking an action, I pretend that picking it causes everyone to know that I am the kind of person who picks that option.

If I'm considering breaking a promise to you, and I am tallying up the costs and benefits, I consider the additional cost of you having known that I would break the promise under these conditions. If I made a promise to you, it's usually because I wanted you to believe that I would keep it. So you knowing that I wouldn't keep the promise is usually a cost, often a very large one.
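The decision rule can be sketched in a few lines of Python. This is a toy model, not a definitive implementation. The `value(action, believed_policy)` function, the action names, and the payoff numbers are all hypothetical and chosen only to mirror the promise example. The ordinary rule holds others' beliefs fixed. The integrity rule scores each action as if everyone already knew I was the kind of person who picks it.

```python
from typing import Callable, Sequence

# value(action, believed_policy) -> how much I like the consequences, given
# what others believe I would do. Both arguments are action names.
ValueFn = Callable[[str, str], float]


def ordinary_choice(actions: Sequence[str], value: ValueFn, current_belief: str) -> str:
    """Pick the best action while holding others' beliefs about me fixed."""
    return max(actions, key=lambda a: value(a, current_belief))


def integrity_choice(actions: Sequence[str], value: ValueFn) -> str:
    """Pick the best action, pretending that picking it makes everyone know I pick it."""
    return max(actions, key=lambda a: value(a, a))


def promise_value(action: str, believed_policy: str) -> float:
    """Hypothetical payoffs for the promise example."""
    # You only rely on my promise if you believe I will keep it.
    reliance = 10.0 if believed_policy == "keep" else 0.0
    # Breaking the promise now gains me something on the side.
    temptation = 2.0 if action == "break" else 0.0
    return reliance + temptation


actions = ["keep", "break"]
print(ordinary_choice(actions, promise_value, current_belief="keep"))  # break
print(integrity_choice(actions, promise_value))                        # keep
```

With these made-up numbers the ordinary rule breaks the promise (12 beats 10), while the integrity rule keeps it (10 beats 2). Breaking is scored in the world where you never relied on the promise in the first place.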

(Image caption: Optimal summary of this post.)

If I'm considering sharing a secret you told me, and I am tallying up the costs and benefits, I consider the additional cost of you having known that I would share this secret. In many cases, that would mean that you wouldn't have shared it with me---a cost which is usually larger than whatever benefit I might gain from sharing it now.

If I'm considering having a friend's back, or deciding whether to be mean, or thinking about what exactly counts as "betrayal," I'm doing the same calculus. (In practice there are many cases where I am pathologically unable to be really mean. One motivation for being really precise about integrity is recovering the ability to engage in normal levels of being a jerk when it's actually a good idea.)

This is a weird kind of effect, since it goes backwards in time and it may contradict what I've actually seen. If I know that you decided to share the secret with me, what does it mean to imagine my decision causing you not to have shared it?

It just means that I imagine the counterfactual where you didn't share the secret, and I think about just how bad that would have been---making the decision as if I did not yet know whether you would share it or not.
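One way to read "making the decision as if I did not yet know" is to score whole policies from the ex-ante point of view, before seeing whether the secret was shared. I then follow the winning policy even after the secret arrives. The sketch below rests on a hypothetical assumption: you share with a probability that depends on which policy you expect me to follow. The probabilities and payoffs are invented for illustration.

```python
# Hypothetical model: you decide whether to confide in me based on the
# policy you (correctly) expect me to follow about passing secrets on.
P_SHARE = {"keep": 0.9, "leak": 0.1}   # chance you share, given my policy
VALUE_OF_BEING_TOLD = 5.0              # value to me of you confiding in me
GAIN_FROM_LEAKING = 1.0                # what I gain by passing the secret on


def payoff_after_sharing(policy: str) -> float:
    """My payoff once the secret has already been shared, for a given policy."""
    return VALUE_OF_BEING_TOLD + (GAIN_FROM_LEAKING if policy == "leak" else 0.0)


def ex_ante_value(policy: str) -> float:
    """Expected payoff of a policy, judged before knowing whether you share."""
    return P_SHARE[policy] * payoff_after_sharing(policy)


def updated_choice() -> str:
    """Compare options after seeing that you shared, ignoring the counterfactual."""
    return max(P_SHARE, key=payoff_after_sharing)


def ex_ante_choice() -> str:
    """Pick the policy that looks best before knowing whether you share."""
    return max(P_SHARE, key=ex_ante_value)


print(updated_choice())  # leak  (6.0 beats 5.0)
print(ex_ante_choice())  # keep  (4.5 beats roughly 0.6)
```

The gap between the two choices is exactly the counterfactual cost described above. Conditioning on having already been told makes leaking look free, but a policy of leaking is what would have stopped you from telling me.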

I find the ideal of integrity very viscerally compelling, significantly more so than other abstract beliefs or principles that I often act on.

II.

This can get pretty confusing, and at the end of the day this simple statement is just an approximation. I could run through a lot of confusing examples and maybe sometime I should, but this post isn't the place for that.

I'm not going to use some complicated reasoning to explain why screwing you over is consistent with integrity; I am just going to be straightforward. I think "being straightforward" is basically what you get if you do the complicated reasoning right. You can believe that or not, but one consequence of integrity is that I'm not going to try to mislead you about it. Another consequence is that when I'm dealing with you, I'm going to interpret integrity like I want you to think that I interpret it.

Integrity doesn't mean merely keeping my word. To the extent that I want to interact with you, I will be the kind of person you will be predictably glad to have interacted with. To that end, I am happy to do nice things that have no direct good consequences for me. I am hesitant to be vengeful; but if I think you've wronged me because you thought it would have no bad consequences for you, I am willing to do malicious things that have no direct good consequences for me.

On the flip side, integrity does not mean that I always keep my word. If you ask me a question that I don't want to answer, and me saying "I don't think I should answer that" would itself reveal information that I don't want to reveal, then I will probably lie. If I say I will do something then I will try to do it, but that just gets tallied up like any other cost or benefit; it's not a hard-and-fast rule. None of these cases are going to feel like gotchas; they are easy to predict given my definition of integrity, and I think they are in line with common-sense intuitions about being basically good.

Some examples where things get more complicated: if we were trying to think of the same number between 1 and 20, I wouldn't assume that we are going to win because by choosing 17 I cause you to know that I'm the kind of person who picks 17. And if you invade Latvia I'm not going to bomb Moscow, assuming that by being arbitrarily vindictive I guarantee your non-aggression. If you want to figure out what I'd do in these cases, think UDT + the arguments in the rest of this post + a reasonable account of logical uncertainty. Or just ask. Sometimes the answer in fact depends on open philosophical questions. But while I find that integrity comes up surprisingly often, really hard decision-theoretic cases come up about as rarely as you'd expect.

A convenient thing about this form of integrity is that it basically means behaving in the way that I'd want to claim to behave in this blog post. If you ask me "doesn't this imply that you would do X, which you only refrained from writing down because it would reflect poorly on you?" then you've answered your own question.

III.

Why would I do this? At face value it may look a bit weird. People's expectations about me aren't shaped by a magical retrocausal influence from my future decision. Instead they are shaped by a messy basket of factors:

- Their past experiences with me.

- Their past experiences with other similar people.

- My reputation.

- Abstract reasoning about what I might do.

- Attempts to "read" my character and intentions from body language, things I say, and other intuitive cues.

- (And so on.)

In some sense, the total "influence" of these factors must add up to 100%.

I think that basically all of these factors give reasons to behave with integrity:

- My decision is going to have a causal influence on what you think of me.

- My decision is going to have a causal influence on what you think of other similar people. I want to be nice to those people. But also my decision is correlated with their decisions (more so the more they are like me) and I want them to be nice to me.

- My decision is going to have a direct effect on my reputation.

- My decision has logical consequences for your reasoning about my decision. After all, I am running a certain kind of algorithm, and you have some ability to imperfectly simulate that algorithm.

- To the extent that your attempts to infer my character or intention are unbiased, being the kind of person who will decide in a particular way will actually cause you to believe I am that kind of person.

- (And so on.)

The strength of each of those considerations depends on how significant each factor was in determining their views about me, and that will vary wildly from person to person and case to case. But if the total influence of all of these factors is really 100%, then just replacing them all with a magical retrocausal influence is going to result in basically the same decision.
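The "adds up to 100%" argument can be made concrete with a weighted sum. This sketch is an illustrative assumption, not a model taken from the post. Each factor gets a weight, meaning its share of what shapes your view of me, and the weights sum to 1. It also gets a responsiveness, meaning how fully that channel ends up tracking my actual decision, from 0 to 1. The magical retrocausal shortcut corresponds to every responsiveness being 1. The `Factor` class and all numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Factor:
    name: str
    weight: float          # share of your view of me explained by this factor
    responsiveness: float  # 1.0 = this channel fully tracks my actual decision


def effective_influence(factors: Sequence[Factor]) -> float:
    """Fraction of the 'magical retrocausal' influence that the real channels deliver."""
    total_weight = sum(f.weight for f in factors)
    if abs(total_weight - 1.0) > 1e-9:
        raise ValueError("weights should add up to 100%")
    return sum(f.weight * f.responsiveness for f in factors)


# Hypothetical weights and responsiveness values, for illustration only.
factors = [
    Factor("past experiences with me", 0.3, 1.0),
    Factor("experiences with similar people", 0.2, 0.6),
    Factor("my reputation", 0.2, 0.9),
    Factor("abstract reasoning about me", 0.1, 0.8),
    Factor("reading intuitive cues", 0.2, 0.7),
]
print(effective_influence(factors))  # about 0.82
```

When every responsiveness is 1.0 the function returns 1.0. That is the sense in which the retrocausal pretense gives basically the same decision. Values below 1.0 measure how far a particular situation falls short of that idealization.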

Some of these considerations are only relevant because I make decisions using UDT rather than causal decision theory. I think this is the right way to make decisions (or at least the way that you should decide to make decisions), but your views may vary. At any rate, it's the way that I make decisions, which is all that I'm describing here.

IV.

What about a really extreme case, where definitely no one will ever learn what I did, and where they don't know anything about me, and where they've never interacted with me or anyone similar to me before? In that case, should I go back to being a consequentialist jerk?

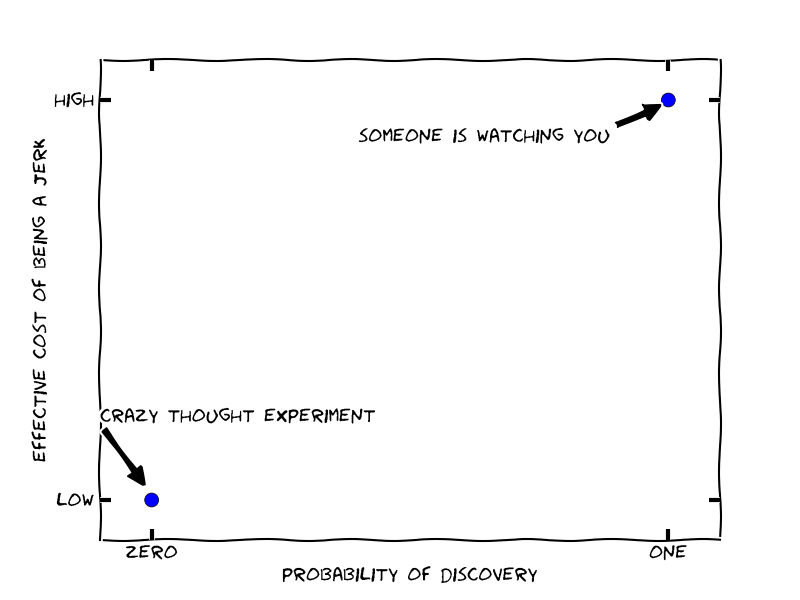

There is a temptation to reject this kind of crazy thought experiment---there are never literally zero causal effects. But like most thought experiments, it is intended to explore an extreme point in the space of possibilities:

Of course we don't usually encounter these extreme cases; most of our decisions sit somewhere in between. The extreme cases are mostly interesting to the extent that realistic situations are in between them and we can usefully interpolate.

For example, you might think that the picture looks something like this:

From this perspective, if I would be a jerk when definitely for sure no one will know, then presumably I am at least a little bit of a jerk when it sure seems like no one will know.

But actually I don't think the graph looks like this.

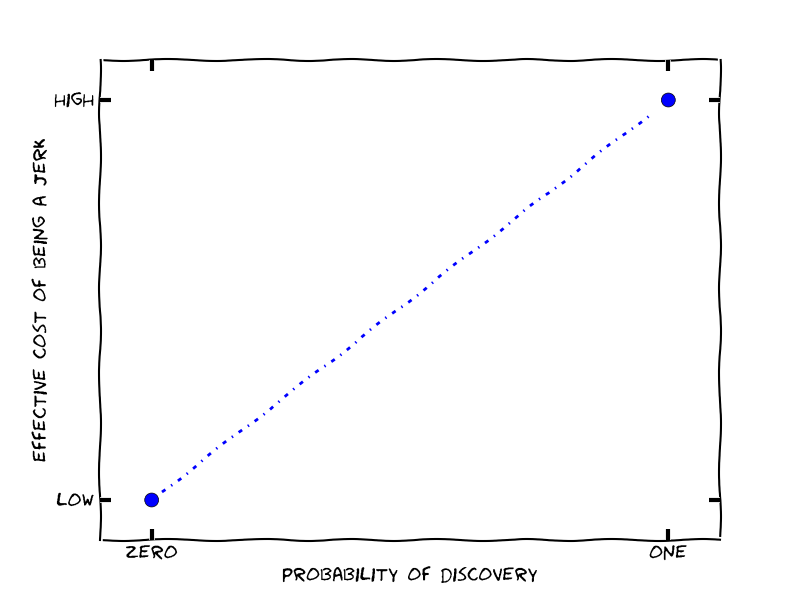

Suppose that Alice and Bob interact, and Alice has either a 50% or a 5% chance of detecting Bob's jerk-like behavior. In either case, if she detects bad behavior she is going to make an update about Bob's characteristics. But there are several reasons to expect that, in the 5% case, the update will be about 10x larger if detection actually happens:

- If Alice is attempting to impose incentives to elicit pro-social behavior from Bob, then the size of the disincentive needs to be 10x larger. This effect is tempered somewhat if imposing twice as large a cost is more than twice as costly for Alice, but still we expect a significant compensating factor.

- For whatever reference class Alice is averaging over (her experiences with Bob, her experiences with people like Bob, other people's experiences with Bob...) Alice has 1/10th as much data about cases with a 5% chance of discovery, and so (once the total number of data points in the class is reasonably large) each data point has nearly 10x as much influence.

- In general, I think that people are especially suspicious of people cheating when they probably won't get caught (and consider it more serious evidence about "real" character), in a way that helps compensate for whatever gaps exist in the last two points.
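A small numerical sketch of the first two bullets follows. Both functions are hypothetical toy models. `expected_cost` assumes the penalty on detection scales as `(p_ref / p) ** compensation`: `compensation = 1` means the penalty grows exactly as fast as detection becomes less likely, and `compensation = 0` means no compensation at all. `per_observation_influence` assumes Alice's estimate behaves like a running average that starts from a small prior weight.

```python
def expected_cost(p_detect: float, penalty_at_ref: float = 1.0,
                  p_ref: float = 0.5, compensation: float = 1.0) -> float:
    """Expected cost of jerk-like behavior = P(detected) * size of the update if detected.

    compensation = 1.0: the update scales as 1/p, fully offsetting rarer detection.
    compensation = 0.0: the update is the same size however likely detection was.
    """
    if p_detect == 0.0:
        return 0.0  # the pathological "no one can ever know" case
    penalty = penalty_at_ref * (p_ref / p_detect) ** compensation
    return p_detect * penalty


def per_observation_influence(n_observations: int, prior_weight: float = 1.0) -> float:
    """Weight of one new data point in a running average that starts from a prior."""
    return 1.0 / (prior_weight + n_observations)


for p in (0.5, 0.05, 0.005, 0.0):
    print(p, expected_cost(p, compensation=1.0), expected_cost(p, compensation=0.8))

# Ten times less data about 5%-detection cases: each case counts almost 10x as much.
print(per_observation_influence(100) / per_observation_influence(1000))
```

With full compensation the expected cost stays at 0.5 for every nonzero detection probability and only collapses at exactly zero. With partial compensation (0.8 here) it falls much more slowly than the detection probability itself. Both settings are meant only to illustrate why the zero-probability thought experiment is a special case.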

In reality, I think the graph is closer to this:

Our original thought experiment is an extremely special case, and the behavior changes rapidly as soon as we move a little bit away from it.

At any rate, these considerations significantly limit the applicability of intuitions from pathological scenarios, and tend to push optimal behavior closer to behaving with integrity.

This effect is especially pronounced when there are many possible channels through which my behavior can affect others' judgments, since then a crazy extreme case must be extreme with respect to every one of these indicators: my behavior must be unobservable, the relevant people must have no ability to infer my behavior from tells in advance, they must know nothing about the algorithm I am running, and so on.
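A toy calculation shows why the extreme case becomes so special when there are many channels. It assumes, unrealistically and only for simplicity, that each channel independently leaks information about my behavior with some fixed probability. The behavior stays fully hidden only if every channel stays silent, so the probabilities multiply. The 20% leak rate is a made-up number.

```python
from math import prod
from typing import Sequence


def probability_fully_hidden(leak_probabilities: Sequence[float]) -> float:
    """Chance that no channel reveals anything, assuming channels are independent."""
    return prod(1.0 - p for p in leak_probabilities)


# Hypothetical channels: direct observation, advance tells, knowledge of my
# decision procedure, reputation, and so on; each leaks only 20% of the time.
for n_channels in (1, 3, 5, 10):
    print(n_channels, probability_fully_hidden([0.2] * n_channels))
```

With five such channels the chance of staying completely hidden is already about 0.33, and with ten it is about 0.11. Real channels are correlated, so this overstates the effect somewhat, but the direction is the same.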

V.

Integrity has one more large advantage: it is often very efficient. Being able to make commitments is useful, as a precondition for most kinds of positive-sum trade. Being able to realize positive-sum trades, without needing to make explicit commitments, is even more useful. (On the revenge side things are a bit more complicated, and I'm only really keen to be vengeful when the behavior was socially inefficient in addition to being bad for my values.)

I'm generally keen to find efficient ways to do good for those around me. For one, I care about the people around me. For two, I feel pretty optimistic that if I create value, some of it will flow back to me. For three, I want to be the kind of person who is good to be around.

So if the optimal level of integrity is 100% from a social perspective but something close to 100% from my personal perspective, I am more than happy to just go with 100%. I think this is probably one of the most cost-effective ways I can sacrifice a (tiny) bit of value in order to help those around me.

On top of that:

- Integrity is most effective when it is straightforward rather than conditional.

- "Behave with integrity" is a whole lot simpler (computationally and psychologically) than executing a complicated calculation to decide exactly when you can skimp.

- Humans have a bunch of emotional responses that seem designed to implement integrity---e.g. vengefulness or a desire to behave honorably---and I find that behaving with integrity also ticks those boxes.

After putting all of this together, I feel like the calculus is pretty straightforward. So I usually don't think about it, and just (aspire to) make decisions with integrity.

VI.

Many consequentialists claim to adopt firm rules like "my word is inviolable" and then justify those rules on consequentialist grounds. But I think on the one hand that approach is too demanding---the people I know who take promises most seriously basically never make them---and on the other it does not go far enough---someone bound by the literal content of their word is only a marginally more useful ally than someone with no scruples at all.

Personally, I get a lot of benefit from having clear definitions; I feel like the operationalization of integrity in this post has worked pretty well, and much better than the deontological constraints it replaced. That said, I'm always interested in adopting something better, and would love to hear pushback or arguments for alternative norms.

I still broadly endorse this post. Here are some ways my views have changed over the last 6 years:

What arguments/evidence caused you to be more hesitant about retaliation?

Nice post!

In case someone is wondering:

We could decline to answer some questions that aren't too revealing so that people won't know which is which. The cost of hiding some innocuous things seems much lower than the benefit of being trusted.
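The suggestion can be checked with Bayes' rule. Assume, hypothetically, that a fraction `s` of questions touch something I want to hide and that I decline all of those. Also assume that I decline a fraction `q` of innocuous questions as cover. Then the posterior probability that a refusal hides something drops quickly as `q` grows. The function name and numbers are illustrative only.

```python
def p_sensitive_given_refusal(s: float, q: float) -> float:
    """P(question is sensitive | I declined), by Bayes' rule.

    s: prior fraction of questions that are sensitive (all of them get declined)
    q: fraction of innocuous questions I also decline, as cover
    """
    p_refuse = s + q * (1.0 - s)
    if p_refuse == 0.0:
        raise ValueError("I never decline anything, so a refusal cannot occur")
    return s / p_refuse


print(p_sensitive_given_refusal(s=0.05, q=0.0))   # 1.0: a refusal gives the game away
print(p_sensitive_given_refusal(s=0.05, q=0.10))  # about 0.34
```

Declining just one innocuous question in ten cuts the information a refusal carries from certainty to roughly one in three, at the modest cost of hiding some harmless answers.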

This is an excellent post. I've been struggling myself to understand to what extent deontological values and the inherent irrationality of humans need to be factored into consequentialist decision making. I've become more and more convinced that values and social norms matter much more than I had previously thought.

I think this post is confused on a number of levels.

First, as far as ideal behavior is concerned integrity isn't a relevant concept. The ideal utilitarian agent will simply always behave in the manner that optimizes expected future utility factoring in the effect that breaking one's word or other actions will have on the perceptions (and thus future actions) of other people.

Now the post rightly notes that as a limited human agent we aren't truly able to engage in this kind of analysis. Both because of our computational limitations and our inability to perfectly deceive it is beneficial to adopt heuristics about not lying, stabbing people in the back etc.. (which we may judge to be worth abandoning in exceptional situations).

However, the post gives us no reason to believe its particular interpretation of integrity, "being straightforward," is the best such heuristic. It merely asserts the author's belief that this somehow works out to be the best.

This brings us to the second major point. Even though the post acknowledges that the very reason for considering integrity is that "I find the ideal of integrity very viscerally compelling, significantly moreso than other abstract beliefs or principles that I often act on," the post proceeds as if it were considering what kind of integrity-like notion would be appropriate to design into (or socially construct in) some alternative society of purely rational agents.

Obviously, the way we should act depends hugely on the way in which others will interpret our actions and respond to them. In the actual world WE WILL BE TRUSTED TO THE EXTENT WE RESPECT THE STANDARD SOCIETAL NOTIONS OF INTEGRITY AND TRUST. It doesn't matter whether some alternative notion of integrity might have been better to have; if we don't show integrity in the traditional manner, we will be punished.

In particular, "being straightforward" will often needlessly imperil people's estimation of our integrity. For example, consider the usual kinds of assurances we give to friends and family that we "will be there for them no matter what" and that "we wouldn't ever abandon them." In truth pretty much everyone, if presented with sufficient data showing their friend or family member to be a horrific serial killer with every intention of continuing to torture and kill people, would turn them in even in the face of protestations of innocence. Does that mean that instead of saying "I'll be there for you whatever happens" we should say "I'll be there for you as long as the balance of probability doesn't suggest that supporting you will cost more than 5 QALYs" (quality adjusted life years)?

No, because being straightforward in that sense causes most people to judge us as weird and abnormal and thereby trust us less. Even though everyone understands at some level that these kinds of assurances hold only ceteris paribus, actually being straightforward about that fact is unusual enough that it makes other people suspect they don't understand our emotions and motivations, and so they give us less trust.

In short: yes, the obvious point that we should adopt some kind of heuristic of keeping our word and otherwise modeling integrity is true. However, the suggestion that this nice simple heuristic is somehow the best one is completely unjustified.

I apologize in advance if I'm a bit snarky.

This view is not broadly accepted amongst the EA community. At the very least, this view is self-defeating in the following sense: such an "ideal utilitarian" should not try to convince other people to be an ideal utilitarian, and should attempt to become a non-ideal utilitarian ASAP (see e.g. Parfit's hitchhiker for the standard counterexample, though obviously there are more realistic cases).

I argued for my conclusion. You may not buy the arguments, and indeed they aren't totally tight, but calling it "mere assertion" seems silly.

This is neither true, nor what I said.

This is what it looks like when something is asserted without argument.

I do agree roughly with this sentiment, but only if it is interpreted sufficiently broadly that it is consistent with my post.

I tried to spell out pretty explicitly what I recommend in the post, right at the beginning ("when I imagine picking an action, I pretend that picking it causes everyone to know that I am the kind of person who picks that option"), and it clearly doesn't recommend anything like this.

You seem to use "being straightforward" in a different way than I do. Saying "I'll be there for you whatever happens" is straightforward if you actually mean the thing that people will understand you as meaning.

Re your first point: yup, they won't try to recruit others to that belief, but so what? That's already a bullet any utilitarian has to bite, thanks to examples like the aliens who will torture the world if anyone believes utilitarianism is true or tries to act as if it is. There is absolutely nothing self-defeating here.

Indeed, if we define utilitarianism as simply the belief that one's preference relation on possible worlds is dictated by the total utility in them, then it follows by definition that the best acts an agent can take are just the ones that maximize utility. So maybe the better way to phrase this is: why care what an agent who pledges themselves to utilitarianism in some way, and wants to recruit others, might need to do? That's a distraction from the simple question of what in fact maximizes utility. If that means convincing everyone not to be utilitarians, then so be it.

--

And yes, re the rest of your points, I guess I just don't see why it matters what would be good to do if other agents responded in the way you argue would be reasonable. Indeed, what makes consequentialism consequentialism is that you aren't acting based on what would happen if you imagined interacting with idealized agents, as a Kantianesque theory might consider, but on what actually happens when you actually act.

I agree the caps were aggressive, and I apologize for that. I also agree that I'm not trying to produce evidence that how people respond to supposed signals of integrity in fact tends to match what they see as evidence that you follow the standard norms. That's just something people need to check against their own experience, asking themselves whether, in their experience, that tends to be true. Ultimately, I think it's just not true that a priori analysis of what should make people see you as trustworthy (or have any other social reaction) is a good guide to what they will actually do.

But I guess that just returns to point 1 and our different conceptions of what utilitarianism requires.

"WE WILL BE TRUSTED TO THE EXTENT WE RESPECT THE STANDARD SOCIETAL NOTIONS OF INTEGRITY AND TRUST"

I think there is a lot to this, but I feel it can be subsumed into Paul's rule of thumb:

Because following standard social rules that everyone assumes to exist is an important part of being able to coordinate with others without very high communication and agreement overheads, you want to at least meet that standard (including following some norms you might have reservations about). Of course this doesn't preclude you meeting a higher standard if having a reputation for going above and beyond would be useful to you (as Paul argues it often is for most of us).

What an interesting area of debate. I want to add my views, drawing on language and experience from beyond EA, and welcome any kind of response.

As a leadership coach, I hold that my principal aim in coaching is to deepen my clients' ability to act from the greatest personal integrity, on the basis that the greater their integrity, then the better the consequences of their actions are likely to be for themselves and others, and the whole world - along the EA principles of Do Good Better and Be Kind. People can sense integrity and it is closely bound up with trustworthiness. Yes, it can also be mis-judged by others.

As I read these posts I found myself looking at a headline in our local paper which said "My mum says to do everything with love". Simple. In my experience, greater integrity leads to greater understanding of love. As the Dalai Lama puts it: loving-kindness. My coaching method of increasing integrity is to challenge (to resolve) internal conflicts of belief, as this develops greater integration of the personality in the whole body-mind and spirit, rather than just continuing a rationalising argument in the head alone. Solve the war inside yourself, don't create it outside yourself.

I don't see the rationalisation of actions such as lying, deceit, revenge, shaming or punishment, even in part, as having any place whatsoever in a person who wants to demonstrate integrity, love, Doing Good Better or Being Kind. These are unintegrated actions, coming from limiting personal beliefs, which just produce an escalation in lowering standards of behaviour, increasing the harms, and making the world a worse place for everyone. The problem is that the world seems to be adopting such principles, including non-compliance with the law.

The first post seems to be written on the premise that the purpose of integrity is to enhance one's own reputation, rather than the better consequentialist purpose of Doing Good Better. But reputation is entirely subjective, in the eye of the beholder, whereas integrity is entirely subjective in a different way: it is within the control of the subject, even though the ultimate consequences might be beyond control.

The problem with concepts like acting out of revenge, or indeed offence, is that they are usually based upon subjective and irrational emotions, devoid of care about the important details, such as ascertaining the true facts, compliance with the rule of law, and the fact that perceptions differ. In any action, whether intended to be good or bad, we cannot predict how others will perceive and respond to our actions. "Others" may just project their own lack of integrity onto us as the "subject", to make us into "perpetrator" to fit their "victim" belief in themselves. The smart response to perceived harm is to see the crisis as an opportunity for active resolution for greater good, not as a self-appointed victim who responds with greater self-justified harm.

Unfortunately, this is not how our current major national leaders act, because they appear not to have done the necessary personal introspection to see the value of acting out of integrity and loving-kindness. Their knee-jerk reaction is to use greater military power to try to destroy their unintegrated sense of "evil" that they project onto others.

Our system for selecting our leaders, at every level, in every institution, is outdated and entirely corrupt (for evidence, read The Dictator's Handbook). Given that it is the most powerful ones who create (and continue) the greatest man-made global existential risks (whilst supposedly being responsible for "Peace & Security" on our planet), personally, I would love to see EA rationalise about how best to change this horrible system which produces the most dangerously corrupt leaders desperately lacking in integrity. And then ACT to create anew. Develop the DAOs?

Can you show an example of how this set of rules helps you to "recover the ability to engage in normal levels of being a jerk when it's actually a good idea"?

Suppose I am considering saying something mean about someone in a context where they won't hear me, and I would be unwilling to say the same thing to their face. I have a hard time with this in general. But there are cases where it is OK according to this heuristic (when they'd be fine knowing that I would say that kind of thing about them under those conditions), and I think those are the cases that I endorse-on-reflection.

The first half of your essay (your method of only deceiving when it would still make sense to deceive if people knew you were such a deceiver) looks entirely disjoint from the second half. In what way do the graphs, the reasons for being honest, etc., support this particular mindset that you have chosen? They just give complicated consequentialist reasons for being honest, which seems to be what you were trying to avoid in the first place.

I don't think the graph makes anything clearer. Are we assuming that you're holding the benefits of deceit fixed? Because that changes a lot of things. We can't decide whether or not deceit is a good idea without having the expected value of deceit.

Why are you marking the typical thought experiment as having a very low cost of discovery? I would think that many typical thought experiments could have a very high cost of discovery - they could reference serious transgressions where large amounts of money, national secrets, lives, etc are at stake and where you might be seen as very immoral for not being honest despite the greater good of your actions. So the cost of discovery would be high yet the probability of discovery would be zero in such a thought experiment. On the other hand, there could be plenty of instances in our lives where we are likely to be discovered yet the cost of discovery is low. For instance, Wikipedia canvassing, or something along those lines.

So I don't see what this line is doing in the two-dimensional space of possibilities. Why do you assume that all instances of deceit take place along this line?

Maybe you're saying that if you hold almost everything constant, then people's reaction to somebody else's deceit depends on how likely they were to be discovered? But it's not clear that it's a large factor. For one thing, people's emotional attitudes to something like this are complex dispositions, not clear functions, and we're contradictory and flawed reasoners. For another, I can't even tell if we do care about someone's expectation of being discovered in our judgements upon those who have committed deceit. Yes, technically it makes sense to deter people more from concealable behavior, but only on a utilitarian principle of punishment does that make sense - which is far from a close approximation of people's emotional response to deceit. It's not a factor in retributive accounts of punishment nor does it play into accounts of moral blameworthiness as far as I know.

I don't see how you even arrived at the shape of the line. You draw it as upward-sloping, but in your bullet-points you give reasons to believe that it would be downward-sloping. You seem to think that these bullets make it more hyperbolic than linear but I don't see how you arrived at that conclusion from the bullet points, which quite clearly imply that the line would just slope downward rather than upward. You assume that the bullet points modulate the interior of the line but not the end points, which is just weird to me.

Also, let me clarify how a thought experiment works. It's not supposed to provide a guide to effective behavior in iterated games or anything like that. A thought experiment works as a philosophical investigation of an underlying principle. The philosophical investigation will leave us with a general principle about ethical value. Then we'll look at empirical information in order to pursue the goal. Usually, however, people don't use thought experiments to argue that consequentialists should lie. The argument for being deceitful would just be that it's what consequentialism demands, so if consequentialism is true, then we ought to lie (sometimes). It doesn't take a special argument from thought experiments to establish that. So let's say we agree that we should do whatever maximizes the best consequences. We'll conduct an empirical investigation of when and how lying maximizes consequences. To a large extent, it will depend on the expected benefit of lying. And it seems unlikely to me that you will be able to find a universal rule for summarizing the right way to behave.

The y axis is the cost of being a jerk, which is (presumably) higher if people are more likely to notice. In particular, it's not the cost of being perceived as a jerk, which (I argue) should be downward sloping.

(It seems like your other confusions about the graphs come from the same miscommunication, sorry about that.)

This is a post about how I think people ought to act in plausible situations. Thought experiments can cast light on that question to the extent they bear relevant similarities to plausible situations. The relationship between thought experiments and plausible situations becomes relevant if we are trying to make inferences about what we should do in plausible situations.

I agree that there are other philosophical questions that this post does not speak to.

I agree that we won't be able to find universal rules. I tried to give a few arguments for why the correct behavior is less sensitive to context than you might expect, such that a simple approximation can be more robust than you would think. (I don't seem to have successfully communicated to you, which is OK. If these aspects of the post are also confusing to others then I may revise them in an attempt to clarify.)

Okay, well the problem here is that it assumes that people have transparent knowledge about what the probability of being discovered is. In reality we can't infer well at all how likely someone thought it was for them to get caught. I think we often see rule breakers as irrational people who just assume that they won't get caught. So I take issue with the approach of taking the amount of disapproval you will get from being a jerk and whittling it down to such a narrow function based on a few ad hoc principles.

I'd suggest a more basic view of psychology and sociology. Trust is hard to build and once someone violates trust then the shadow of doing so stays with them for a long time. If you do one shady thing once and then apologize and make amends for it then you can be forgiven (e.g. Givewell) but if you do shady things repeatedly while also apologizing repeatedly then you're hosed (e.g. Glebgate). So you get two strikes, essentially. Therefore, definitely don't break your trust, but then again if you have the reputation for it anyway then it's not as big a deal to keep it up.

But whichever way you explain it, you're still just doing the consequentialist calculus. And you still have to think about things in individual situations which are unusual. Moreover, you've still done nothing to actually support the proposed rule in the first half of the post.

Ok, but you're not actually answering the philosophical issue, and people don't seem to think by way of thought experiment in their applied ethical reasoning so it's a bit of an odd way of discussing it. You could just as easily ignore the idea of the thought experiment and simply say "here's what the consequences of honesty and deceit are."

This seems to be an interesting approach to this question. However, for a top level post in this forum, I would like to see more of an attempt to link this directly to effective altruism, which, as many have noted, is not simply consequentialism. There is no mention of 'effective altruism', 'charity', 'career', 'poverty', 'animal' or 'existential risk' (of course effective altruism is broader than these things, but I think this is indicative).

(Writing in a personal capacity)

Effective altruism is strongly linked with consequentialism, so much so, that I don't think a more explicit link is required.

I found Paul's post useful, but I think it would have been good to point out that EA is not a type of consequentialism, since that's a misconception I think we should try to stamp out.