Posts tagged community

Recent discussion

An alternate stance on moderation (from @Habryka).

This is from this comment responding to this post about there being too many bans on LessWrong. Note how LessWrong is less moderated than here in that it (I guess) responds to individual posts less often, but more moderated in that it (I guess) rate-limits people more without reason.

I found it thought provoking. I'd recommend reading it.

Thanks for making this post!

One of the reasons why I like rate-limits instead of bans is that it allows people to complain about the rate-limiting and to participate in discussion on their own posts (so seeing a harsh rate-limit of something like "1 comment per 3 days" is not equivalent to a general ban from LessWrong, but should be interpreted more as "please comment primarily on your own posts", though of course it shares many important properties of a ban).

This is pretty much the opposite of the EA Forum's approach, which favours bans.

...Things that seem most important to bring up in terms of moderation philosophy:

Moderation on LessWrong does not depend on effort

"Another thing I've noticed is that almost all the users are trying. They are trying to use rationality, trying to understand what's been written here, trying to apply Bayes' rule or understand AI. Even some of the users with negative karma are trying, just having more difficulty."

Just because someone is genuinely trying to contribute to LessWrong does not mean LessWrong is a good place for them. LessWrong has a particular culture, with particular standards and particular interests, and I think many people, even if they are genuinely trying, don't fit well within that culture and those standards.

In making rate-limiting decisions like this I don't pay much attention to whether the user in question is "genuinely

The two podcasts where I discuss FTX are now out:

The Sam Harris podcast is more aimed at a general audience; the Spencer Greenberg podcast is more aimed at people already familiar with EA. (I’ve also...

Elon Musk

Stuart Buck asks:

“[W]hy was MacAskill trying to ingratiate himself with Elon Musk so that SBF could put several billion dollars (not even his in the first place) towards buying Twitter? Contributing towards Musk's purchase of Twitter was the best EA use of several billion dollars? That was going to save more lives than any other philanthropic opportunity? Based on what analysis?”

Sam was interested in investing in Twitter because he thought it would be a good investment; it would be a way of making more money for him to give away, rather than a way...

I started working in cooperative AI almost a year ago, and because it is an emerging field, I found it quite confusing at times, since there is very little introductory material aimed at beginners. My hope with this post is that by summing up my own confusions and how I understand them...

Thank you Shaun!

I found myself wondering where we would fit AI Law / AI Policy into that model.

I would think policy work might be spread out over the landscape? As an example, if we think of policy work aiming to establish the use of certain evaluations of systems, such evaluations could target different kinds of risks/qualities that would map to different parts of the diagram?

This is a Book Review & Summary of The Art of Gathering: How We Meet and Why It Matters by Priya Parker.

Rating: 4/5

I've pulled the main insights and actionable recommendations from each chapter, so someone can orient themselves to the main upshots of the ...

Ha yes that would have been helpful of me, I agree! Unfortunately, I can't remember much, it was a couple of years ago. I remember experiencing a significant vibes mismatch in the section on excluding people (but maybe I was just being close-minded) and frustration with its wordiness.

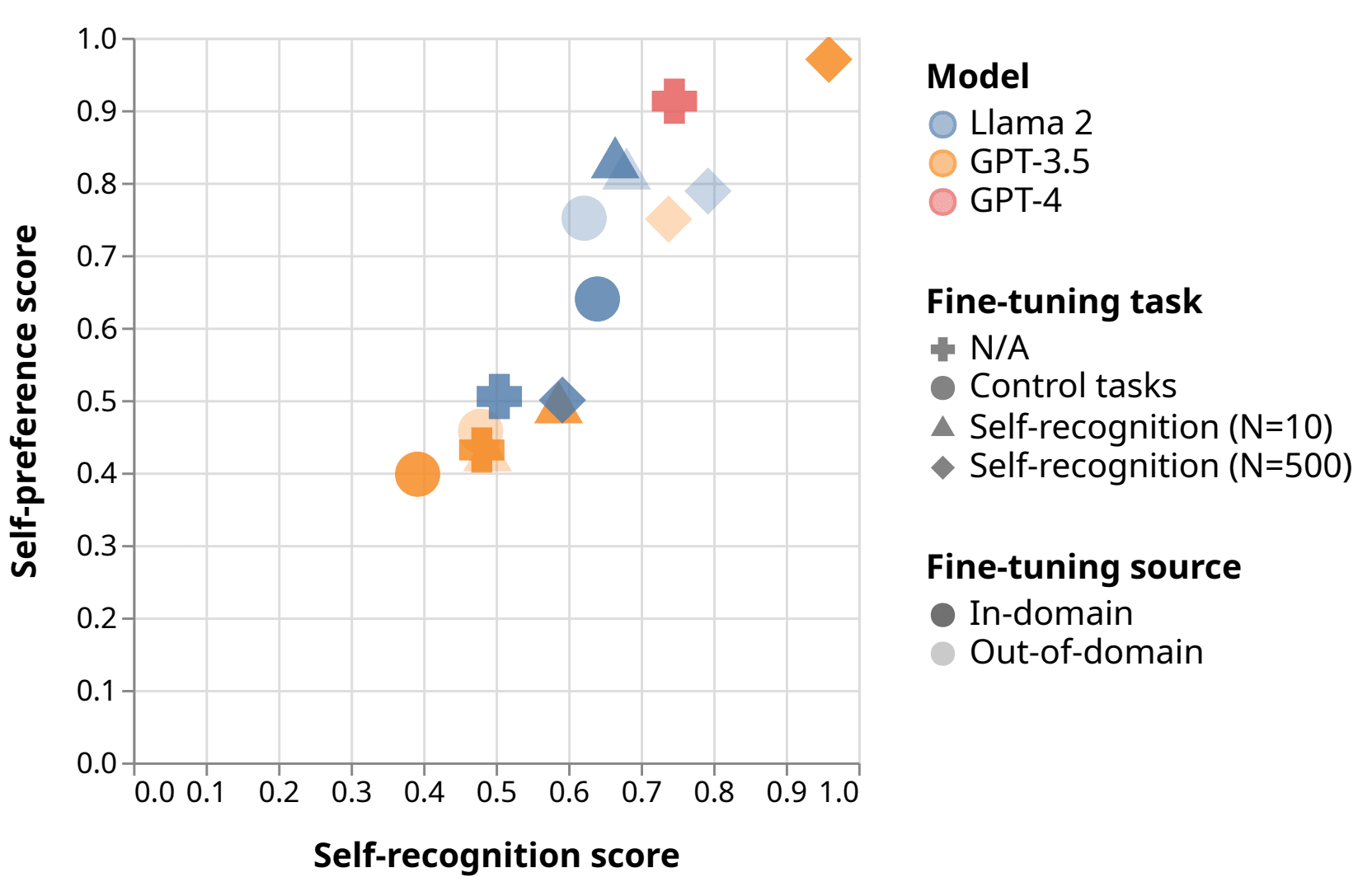

Self-evaluation using LLMs is used in reward modeling, model-based benchmarks like GPTScore and AlpacaEval, self-refinement, and constitutional AI. LLMs have been shown to be accurate at approximating human annotators on some tasks.

But these methods are threatened by self...

Cool instance of black box evaluation - seems like a relatively simple study technically but really informative.

Do you have more ideas for future research along those lines you'd like to see?

Excerpt from Impact Report 2022-2023

"Creating a better future, for animals and humans

£242,510 of research funded

3 Home Office meetings attended

5 PhDs completed through the FRAME Lab

33 people attended our Training School in Norway and our experimental design training sessions hosted in the UK

43 Volunteers giving their time and expertise

£15,538 kindly donated by 807 wonderful people

13 generous legacy gifts received

16 like-minded companies and trusts supporting us

If you're reading this impact report then you likely want to end the use of animals in biomedical research and testing as much as we do. Thank you! That's our sole mission, to create a world where no animal suffers for science. And we're creating that world every day by refocusing funding on non-animal, human-centred methods that'll benefit animals and humans. We can only do...

Anders Sandberg has written a “final report” released simultaneously with the announcement of FHI’s closure. The abstract and an excerpt follow.

...Normally manifestos are written first, and then hopefully stimulate actors to implement their vision. This document is the reverse

I'm awestruck, that is an incredible track record. Thanks for taking the time to write this out.

These are concepts and ideas I regularly use throughout my week and which have significantly shaped my thinking. A deep thanks to everyone who has contributed to FHI, your work certainly had an influence on me.

It seems plausible to me that those involved in Nonlinear have received more social sanction than those involved in FTX, even though the latter was obviously more harmful to this community and the world.