Recent discussion

LLM self-evaluation is used in reward modeling, model-based benchmarks like GPTScore and AlpacaEval, self-refinement, and constitutional AI. LLMs have been shown to approximate human annotators accurately on some tasks.

But these methods are threatened by self...

Summary

- Many views, including even some person-affecting views, endorse the repugnant conclusion (and very repugnant conclusion) when set up as a choice between three options, with a benign addition option.

- Many consequentialist(-ish) views, including many person-affecting

Then, I think there are ways to interpret Dasgupta's view as compatible with "ethics being about affecting persons", step by step:

- Step 1 rules out options based on pairwise comparisons within the same populations, or populations with the same number of people. Because we never compare existence to nonexistence at this step (we only compare the same people, or the same number of people as in nonidentity cases), it is arguably about affecting persons.

- Step 2 is just necessitarianism on the remaining options. Definitely about affecting persons.

These other views also seem compatible...

Anders Sandberg has written a “final report” released simultaneously with the announcement of FHI’s closure. The abstract and an excerpt follow.

...Normally manifestos are written first, and then hopefully stimulate actors to implement their vision. This document is the reverse

From Bostrom's website, an updated "My Work" section reads:

...... That’s why I founded the Future of Humanity Institute at Oxford University in 2005. FHI brought together an interdisciplinary bunch of brilliant (and eccentric!) minds, and sought to shield them as much as possible from the pressures of regular career academia; and thus were laid the foundations for exciting new fields of study.

Those were heady years. FHI was a unique place - extremely intellectually alive and creative - and remarkable progress was made. FHI was also quite fertile, spawning a n

Super broad question, I know.

I've been going down the rabbit hole of critical psychiatry lately and I'm finding it fascinating. Parts of it seem convincing and anecdotally align with my (admittedly extensive) interactions with the psychiatric system. But the evidence in both directions seems very cherry-picked and I haven't found an overall balanced view of both sides of the argument, and I'd like to be better informed, both to make personal decisions on the matter and to potentially advocate for evidence-based mental health policies.

Has anyone done (or is anyone interested enough to do) a somewhat thorough literature review on the effectiveness of things like medication, psychiatric hospitalizations, etc.?

Things I've been looking at that I'd be interested in a critical evaluation of:

...Open Philanthropy’s “Day in the Life” series showcases the wide-ranging work of our staff, spotlighting individual team members as they navigate a typical workday. We hope these posts provide an inside look into what working at Open Phil is really like. If you’re interested in joining our team, we encourage you to check out our open roles.

Alex Bowles is a Senior Program Associate on Open Philanthropy’s Science and Global Health R&D team[1], and a member of the Global Health and Wellbeing Cause Prioritization team. His responsibilities include estimating the cost-effectiveness of research and development grants in science and global health, identifying and assessing new strategic areas for the team, and investigating new Open Phil cause areas within global health and wellbeing.

Day in the Life

I’m part of the ~70% of Open Phil staff who work...

Identity

In theory of mind, the question of how to define an "individual" is complicated. If you're not familiar with this area of philosophy, see Wait But Why's introduction.

I think most people in EA circles subscribe to the computational theory of mind, which means that...

By inference, if you are one of those copies, the 'moral worth' of your own perceived torture will therefore be one ten-billionth of its normal level. So, selfishly, that's a huge upside: I might prefer being one of 10 billion identical torturees as long as I uniquely get a nice back scratch afterwards, for example.

How bad would it be to cause human extinction? ‘If we do not soon destroy ourselves’, write Carl Sagan and Richard Turco, ‘but instead survive for a typical lifetime of a successful species, there will be humans for another 10 million years or so. Assuming that our lifespan...

Do you intend for the population to recover in B, or extinction with no future people? In the post, you write that the second virus "will kill everybody on earth". I'd assume that means extinction.

If B (killing 8 billion necessary people) does mean extinction and you think B is better than A, then you prefer extinction to extra future deaths. And your argument seems general, e.g. we should just go extinct now to prevent the deaths of future people. If they're never born, they can't die. You'd be assigning negative value to additional deaths, but no positiv...

Ethical Implications of AI in Military Operations: A Look at Project Nimbus

Recently, 'Democracy Now' highlighted Google’s involvement in Project Nimbus, a $1.2 billion initiative to provide cloud computing services to the Israeli government, including military applications. Google employees have raised concerns about the use of AI in creating 'kill lists' with minimal human oversight, as well as the usage of Google Photos to identify and detain individuals. This raises ethical questions about the role of AI in warfare and surveillance.

Despite a sit-in, and retaliation against those speaking out against the project, there has been little visible impact on the continuation of the contract. The most recent protesters faced arrest. What does this suggest about the power of AI in the hands of governments and the efficacy of public dissent in influencing such high-stakes deployments of AI?

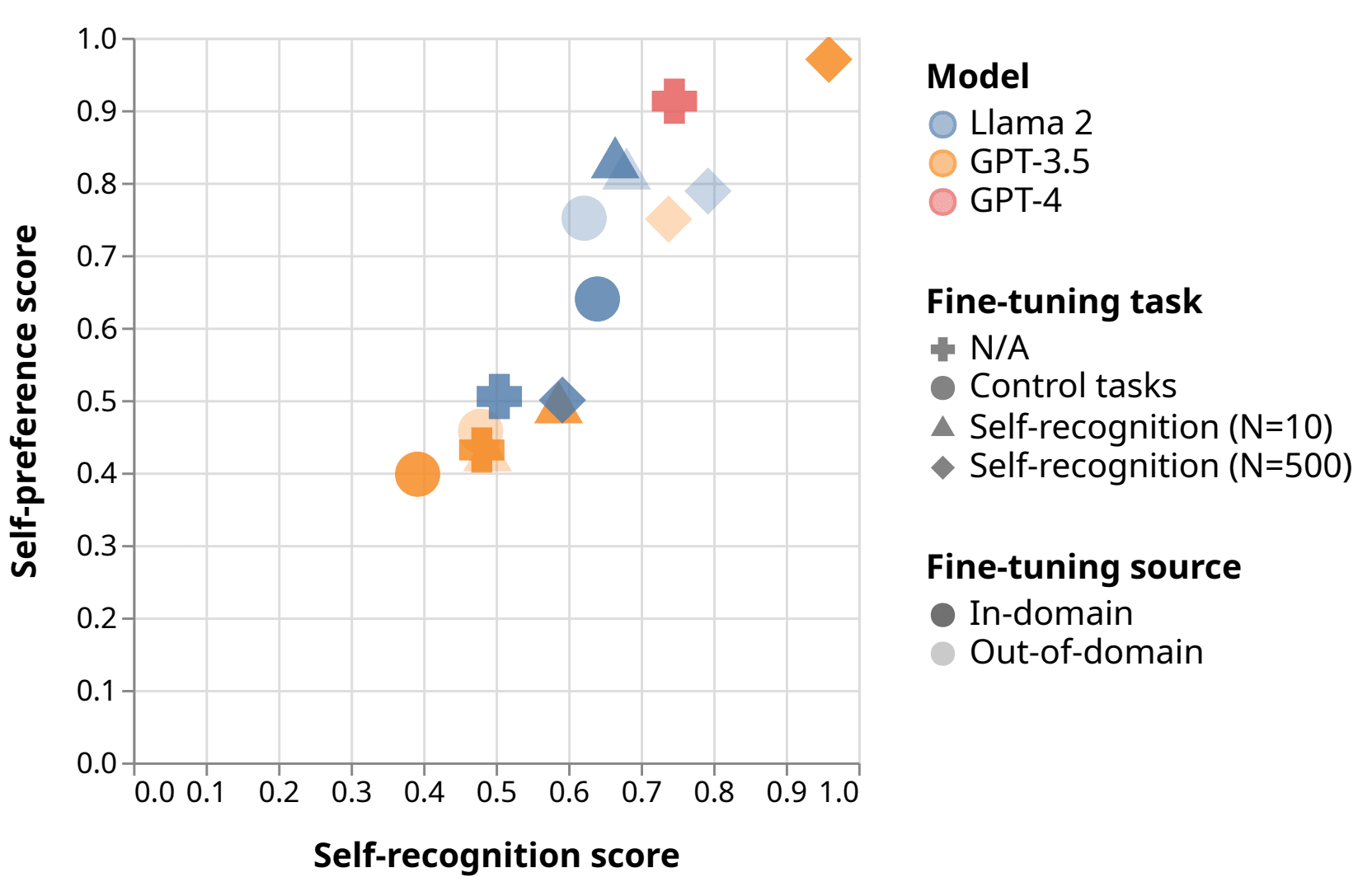

Interesting. I think I can tell an intuitive story for why this would be the case, but I'm unsure whether that intuitive story would predict all the details of which models recognize and prefer which other models.

As an intuition pump, consider asking an LLM a subjective multiple-choice question, then taking that answer and asking a second LLM to evaluate it. The evaluation task implicitly asks the evaluator to answer the same question, then cross-check the results. If the two LLMs are instances of the same model, their answers will be more strongly cor...
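To make the intuition pump a bit more concrete, here is a toy sketch. It assumes (my simplification, not something claimed above) that the evaluator approves an answer exactly when its own independently sampled answer matches, and that each model can be summarized by a probability distribution over the options. The distributions `model_x` and `model_y` are made-up numbers for illustration, not measurements of any real model.

```python
from typing import Sequence


def agreement_probability(p: Sequence[float], q: Sequence[float]) -> float:
    """Probability that two independent samples, one from p and one from q,
    land on the same multiple-choice option."""
    if len(p) != len(q):
        raise ValueError("distributions must cover the same options")
    return sum(a * b for a, b in zip(p, q))


# Hypothetical answer distributions over four options (A, B, C, D).
model_x = [0.7, 0.1, 0.1, 0.1]      # assumed: leans strongly toward option A
model_y = [0.25, 0.25, 0.25, 0.25]  # assumed: no preference among options

# Evaluator is another instance of the same model as the answerer.
same_model = agreement_probability(model_x, model_x)

# Evaluator is a different model.
cross_model = agreement_probability(model_x, model_y)

print(f"same-model approval rate:  {same_model:.2f}")
print(f"cross-model approval rate: {cross_model:.2f}")
```

With these particular numbers the same-model evaluator approves more often than the cross-model one. That is not guaranteed in general: a different evaluator that happens to concentrate on the answerer's favorite option could agree even more often, which may be one reason the intuitive story does not pin down all the details of which models prefer which.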