Posts tagged community

Recent discussion

Anders Sandberg has written a “final report” released simultaneously with the announcement of FHI’s closure. The abstract and an excerpt follow.

...Normally manifestos are written first, and then hopefully stimulate actors to implement their vision. This document is the reverse

Open Philanthropy’s “Day in the Life” series showcases the wide-ranging work of our staff, spotlighting individual team members as they navigate a typical workday. We hope these posts provide an inside look into what working at Open Phil is really like. If you’re interested in joining our team, we encourage you to check out our open roles.

Alex Bowles is a Senior Program Associate on Open Philanthropy’s Science and Global Health R&D team[1], and a member of the Global Health and Wellbeing Cause Prioritization team. His responsibilities include estimating the cost-effectiveness of research and development grants in science and global health, identifying and assessing new strategic areas for the team, and investigating new Open Phil cause areas within global health and wellbeing.

Day in the Life

I’m part of the ~70% of Open Phil staff who work...

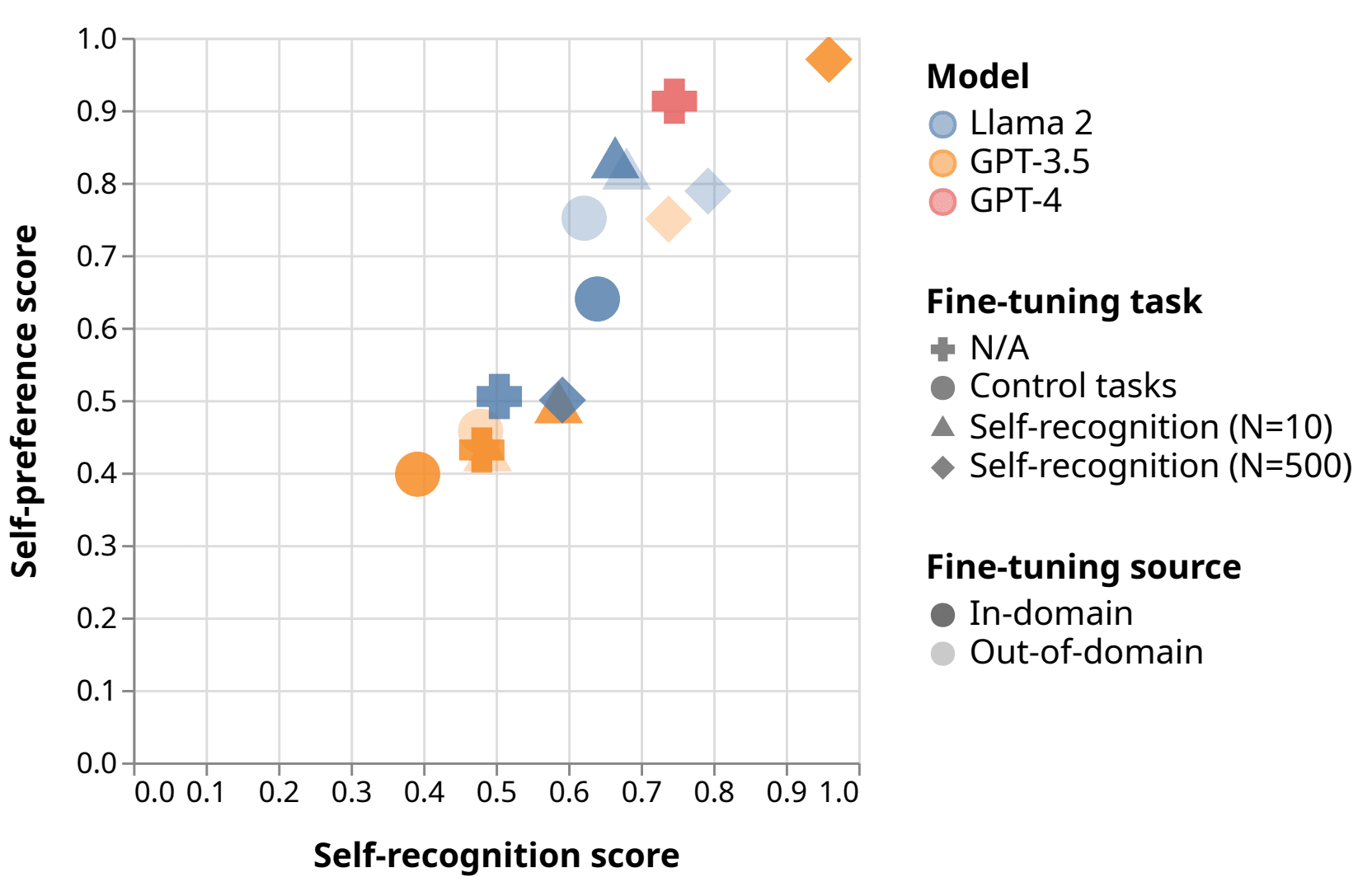

Self-evaluation using LLMs is used in reward modeling, model-based benchmarks like GPTScore and AlpacaEval, self-refinement, and constitutional AI. LLMs have been shown to be accurate at approximating human annotators on some tasks.

But these methods are threatened by self-preference, a bias in which an LLM evaluator scores its own outputs higher than texts written by other LLMs or humans, relative to the judgments of human annotators. Self-preference has been observed in GPT-4-based dialogue benchmarks and in small models rating text summaries.

We attempt to connect this to self-recognition, the ability of LLMs to distinguish their own outputs from text written by other LLMs or by humans.

We find that frontier LLMs exhibit self-preference and self-recognition ability. To establish evidence of causation between self-recognition and self-preference, we fine-tune GPT-3.5 and Llama-2-7b evaluator...

Identity

In philosophy of mind, the question of how to define an "individual" is complicated. If you're not familiar with this area of philosophy, see Wait But Why's introduction.

I think most people in EA circles subscribe to the computational theory of mind, which means that...

By inference, if you are one of those copies, the 'moral worth' of your own perceived torture will be 1/10-billionth of its normal level. So, selfishly, that's a huge upside: I might prefer being one of 10 billion identical torturees as long as I uniquely get a nice back scratch afterwards, for example.

How bad would it be to cause human extinction? ‘If we do not soon destroy ourselves,’ write Carl Sagan and Richard Turco, ‘but instead survive for a typical lifetime of a successful species, there will be humans for another 10 million years or so. Assuming that our lifespan...

Do you intend for the population to recover in B, or extinction with no future people? In the post, you write that the second virus "will kill everybody on earth". I'd assume that means extinction.

If B (killing 8 billion necessary people) does mean extinction and you think B is better than A, then you prefer extinction to extra future deaths. And your argument seems general, e.g. we should just go extinct now to prevent the deaths of future people. If they're never born, they can't die. You'd be assigning negative value to additional deaths, but no positiv...

Ethical Implications of AI in Military Operations: A Look at Project Nimbus

Recently, 'Democracy Now' highlighted Google’s involvement in Project Nimbus, a $1.2 billion initiative to provide cloud computing services to the Israeli government, including military applications. Google employees have raised concerns about the use of AI to create 'kill lists' with minimal human oversight, as well as the use of Google Photos to identify and detain individuals. This raises ethical questions about the role of AI in warfare and surveillance.

Despite a sit-in, the contract has continued with little visible change, and those speaking out against the project have faced retaliation. The most recent protesters faced arrest. What does this suggest about the power of AI in the hands of governments, and about how effective public dissent can be in influencing such high-stakes AI deployments?

Hi everyone, I am a high school senior who is extremely passionate about EA, and I'm hoping to engage actively with EA before I start college. I'm wondering if there are remote EA-related volunteer opportunities available to high schoolers. My goal is to acquire hands-on...

See https://ea-internships.pory.app/board; you can filter for volunteer opportunities.

It would be helpful to mention whether you have a background or interest in particular cause areas.

I'm posting this to tie in with the Forum's Draft Amnesty Week (March 11-17) plans, but it is also a question of more general interest. The last time this question was posted, it got some great responses.

This post is a companion post for What posts are you thinking...

That's sad. For anyone interested in why they shut down (I'd thought they had an indefinitely sustainable endowment!), the archived version of their website gives some info: